AI and Data Privacy Compliance

To date, the European Union (EU) has adopted two significant pieces of legislation that affect organisations both within and outside the EU: the General Data Protection Regulation (GDPR), which governs the handling of personal data, and the Artificial Intelligence (AI) Act, which governs the development and deployment of AI systems.

The AI Act regulates AI technologies based on risk levels, with stricter requirements for high-risk applications like biometric surveillance, credit scoring, hiring algorithms, and medical diagnostics. For businesses, developers, and innovators, understanding these regulations is key to staying compliant, minimising legal risks, and ensuring ethical AI development.

GDPR: The Foundation of Data Protection

Adopted in 2016 and applicable since May 2018, the GDPR covers any organisation that processes the personal data of individuals within the EU, irrespective of where the organisation is located. Businesses worldwide must therefore comply if they handle EU residents' data.

Key GDPR Requirements for Businesses and AI Developers

Lawful Basis for Data Processing[1]

- Businesses must establish and document a valid legal basis for collecting and processing personal data, such as consent, contractual necessity, compliance with a legal obligation, or legitimate interests. This keeps data processing transparent, lawful, and aligned with GDPR principles.
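The lawful-basis requirement above can be enforced in software as a simple gate before any processing runs. The following is a minimal sketch, not a definitive implementation: `LawfulBasis`, `ProcessingActivity`, and `may_process` are hypothetical names introduced for illustration, and the enum lists only the four bases mentioned in the text (GDPR Article 6 also recognises vital interests and public task).

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class LawfulBasis(Enum):
    """The four legal bases named in the text; Article 6 lists two more."""
    CONSENT = "consent"
    CONTRACT = "contract"
    LEGAL_OBLIGATION = "legal_obligation"
    LEGITIMATE_INTERESTS = "legitimate_interests"


@dataclass(frozen=True)
class ProcessingActivity:
    """Hypothetical record describing one processing purpose."""
    purpose: str
    lawful_basis: Optional[LawfulBasis]
    consent_recorded: bool = False  # only relevant when the basis is CONSENT


def may_process(activity: ProcessingActivity) -> bool:
    """Allow processing only when a legal basis is documented.

    When the basis is consent, a consent record must also exist.
    """
    if activity.lawful_basis is None:
        return False
    if activity.lawful_basis is LawfulBasis.CONSENT:
        return activity.consent_recorded
    return True
```

A check like this does not decide which basis is legally appropriate; it only ensures that no processing purpose goes live without one on record.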

Transparency and User Rights[2]

- Organisations must clearly inform individuals about the personal data they collect, how it is used, and whether automated systems, including those capable of decision-making or profiling, are involved in processing that data. GDPR requires transparency, particularly when data is obtained from third-party sources, ensuring individuals are aware of how their information is being handled. This includes providing details on the categories of data processed, the purposes of processing, and any significant effects of automated decision-making, in line with GDPR's broader principles of fairness and accountability.

- Data subjects have the right to access[3], rectify[4], and erase[5] their personal data, and to restrict its processing[6].

Automated Decision-Making[7] and AI

- Any AI application that processes personal data must comply with the GDPR. Penalties for non-compliance can reach €20 million or 4% of global annual turnover, whichever is higher, so alignment with the GDPR is essential to limiting legal and financial risk.

The EU AI Act: Regulating AI Risks and Compliance

Enacted in 2024, the EU AI Act introduces a regulatory framework governing the development, deployment, and use of AI systems within the EU. In contrast to the GDPR, which centres on individual rights, the AI Act categorises AI systems according to their risk levels and imposes corresponding obligations.

Key Requirements for Businesses, AI Developers, and Innovators

AI Risk Classification System[11]

- Unacceptable Risk AI: banned (e.g., AI for mass surveillance, social scoring).

- High Risk AI[12]: strict regulations apply (e.g., AI used in finance, recruitment, healthcare).

- Limited and Minimal Risk AI: fewer compliance obligations, but transparency is still required.
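As a rough illustration of how the tiers above might feed an internal triage step, the sketch below maps the example use cases from the list to a tier. Everything here is an assumption made for illustration: `RiskTier`, `EXAMPLE_USE_CASES`, and `triage` are hypothetical names, and the lookup contains only the examples given in the text. Real classification depends on the Act's detailed criteria and on legal review.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "banned"
    HIGH = "strict regulations apply"
    LIMITED_OR_MINIMAL = "fewer obligations, transparency still required"


# Illustrative lookup built only from the examples in the list above.
EXAMPLE_USE_CASES = {
    "mass surveillance": RiskTier.UNACCEPTABLE,
    "social scoring": RiskTier.UNACCEPTABLE,
    "finance": RiskTier.HIGH,
    "recruitment": RiskTier.HIGH,
    "healthcare": RiskTier.HIGH,
}


def triage(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known example, or None if it needs manual review.

    Unknown use cases are deliberately NOT defaulted to the lowest tier.
    """
    return EXAMPLE_USE_CASES.get(use_case.strip().lower())
```

Returning `None` for anything outside the lookup forces an explicit assessment instead of silently treating an unclassified system as low risk.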

High-Risk AI Compliance Obligations[13]

- AI systems in critical areas (e.g., hiring, legal decisions, finance) must have human oversight mechanisms.

- Developers must ensure bias detection and mitigation to prevent discrimination.

- AI must be transparent and explainable, allowing users to understand its decisions.

Transparency and Disclosure[14]

- Users must be clearly informed when they are interacting with AI, such as chatbots or facial recognition systems.

- AI-generated content (deepfakes, synthetic media) must be clearly labelled.

Data and Model Governance[15]

- Businesses must keep detailed records of AI training data, biases, and system performance.

- Developers must validate datasets to prevent discrimination or privacy risks.
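One way to make these record-keeping duties concrete is a small structured record per model. The sketch below is only an assumption about what such a record could contain: the class and field names (`DatasetRecord`, `ModelRecord`, `known_biases`, `validated_on`) are hypothetical and are not prescribed by the AI Act.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass
class DatasetRecord:
    """Hypothetical documentation entry for one training dataset."""
    name: str
    source: str
    contains_personal_data: bool
    known_biases: List[str] = field(default_factory=list)
    validated_on: Optional[date] = None  # None means not yet validated


@dataclass
class ModelRecord:
    """Hypothetical documentation entry for one AI system."""
    model_name: str
    datasets: List[DatasetRecord]
    performance: Dict[str, float] = field(default_factory=dict)


def unvalidated_datasets(record: ModelRecord) -> List[str]:
    """List datasets that still lack a recorded validation date."""
    return [d.name for d in record.datasets if d.validated_on is None]
```

A release checklist could, for example, refuse deployment while `unvalidated_datasets` returns a non-empty list.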

Enforcement and Penalties[16]

- Fines for the most severe violations can reach €35 million or 7% of global annual turnover, whichever is higher.
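To see how the fixed amount and the turnover percentage interact, the sketch below computes the upper bound under both regimes, using the figures quoted in this article (€20 million or 4% for the GDPR, €35 million or 7% for the AI Act). It assumes the "whichever is higher" rule applies in both cases. The function name `fine_ceiling_eur` and the constants are hypothetical, and the result is a ceiling, not a prediction of any actual fine.

```python
# (fixed cap in EUR, percentage of global annual turnover), as quoted in the text
GDPR_CEILING = (20_000_000, 4)
AI_ACT_CEILING = (35_000_000, 7)


def fine_ceiling_eur(annual_turnover_eur: int, fixed_cap_eur: int, turnover_percent: int) -> int:
    """Return the higher of the fixed cap and the turnover-based amount.

    Integer arithmetic avoids floating-point rounding on large euro amounts.
    """
    turnover_based = annual_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)


# A company with EUR 1 billion in turnover: the percentage dominates.
large = 1_000_000_000
print(fine_ceiling_eur(large, *GDPR_CEILING))    # 40000000
print(fine_ceiling_eur(large, *AI_ACT_CEILING))  # 70000000

# A company with EUR 100 million in turnover: the fixed cap dominates.
small = 100_000_000
print(fine_ceiling_eur(small, *GDPR_CEILING))    # 20000000
print(fine_ceiling_eur(small, *AI_ACT_CEILING))  # 35000000
```

The crossover point is €500 million in turnover under both sets of figures: below it the fixed amount sets the ceiling, above it the percentage does.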

AI developers and businesses must ensure compliance before deploying AI in high-risk environments to avoid regulatory issues and reputational risks.

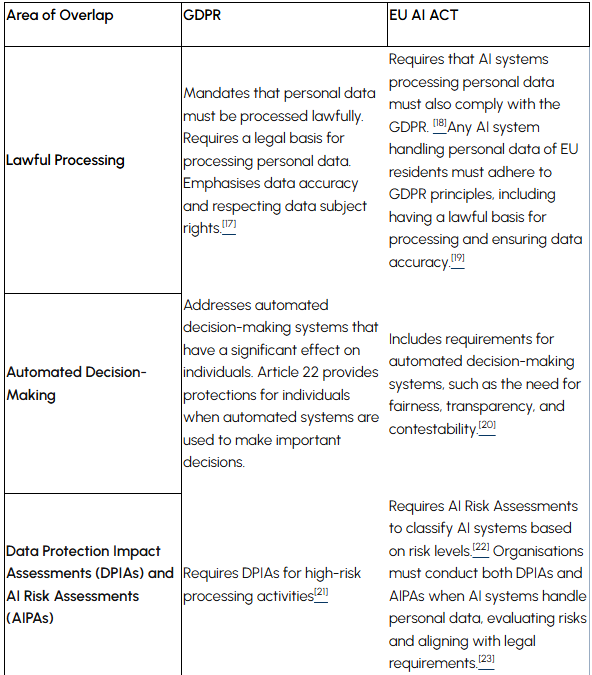

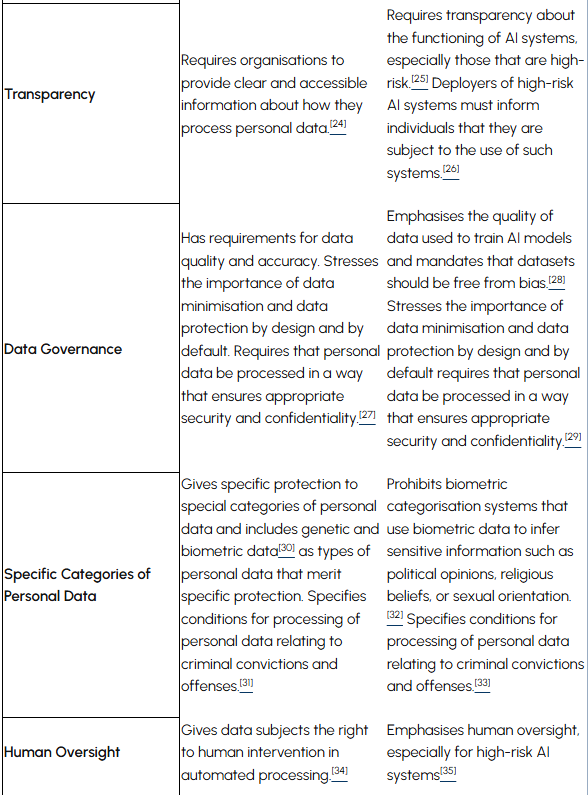

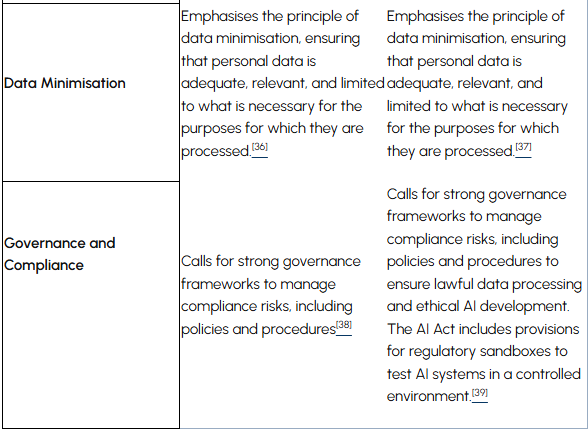

Overlap Between GDPR and the EU AI Act: What Businesses Need to Know

While GDPR focuses on data privacy and the AI Act on AI risk management, businesses need to comply with both when AI systems handle personal data.

Implications for Businesses:

- If your AI system processes personal data, both GDPR and the AI Act apply.

- Automated decision-making must be fair, transparent, and contestable.

- Strong governance frameworks are needed to manage compliance risks.

Compliance Strategies for AI-Driven Businesses

To meet GDPR and AI Act requirements, organisations should:

Conduct Data and AI Impact Assessments

- Data Protection Impact Assessments (DPIAs) for GDPR compliance.[40]

- AI Risk Assessments to classify AI under the AI Act.[41]

Implement Human Oversight for High-Risk AI

- Ensure AI decisions affecting individuals have a human-in-the-loop.[42]

- Use explainable AI (XAI) techniques to improve transparency.
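A human-in-the-loop requirement can be expressed as a gate that refuses to finalise an automated decision about an individual until a named reviewer has signed it off. The sketch below is one possible shape for such a gate, under stated assumptions: `Decision`, `finalise`, and the `reviewer` callback are hypothetical names, and the `explanation` field merely stands in for whatever an XAI technique would produce.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Decision:
    """Hypothetical AI decision awaiting sign-off."""
    subject_id: str
    outcome: str                       # e.g. "approve" or "reject"
    explanation: str                   # human-readable rationale for the outcome
    reviewed_by: Optional[str] = None  # set by the human reviewer


def finalise(
    proposed: Decision,
    affects_individual: bool,
    reviewer: Callable[[Decision], Decision],
) -> Decision:
    """Pass decisions affecting individuals through a human reviewer.

    The reviewer may confirm or change the outcome but must identify
    themselves; otherwise the decision is rejected as unreviewed.
    """
    if not affects_individual:
        return proposed
    reviewed = reviewer(proposed)
    if reviewed.reviewed_by is None:
        raise ValueError("decision affecting an individual requires human review")
    return reviewed
```

Because the reviewer returns a new `Decision`, the human can override the model's outcome as well as confirm it, which is the substance of meaningful oversight.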

Strengthen Data Governance & Documentation