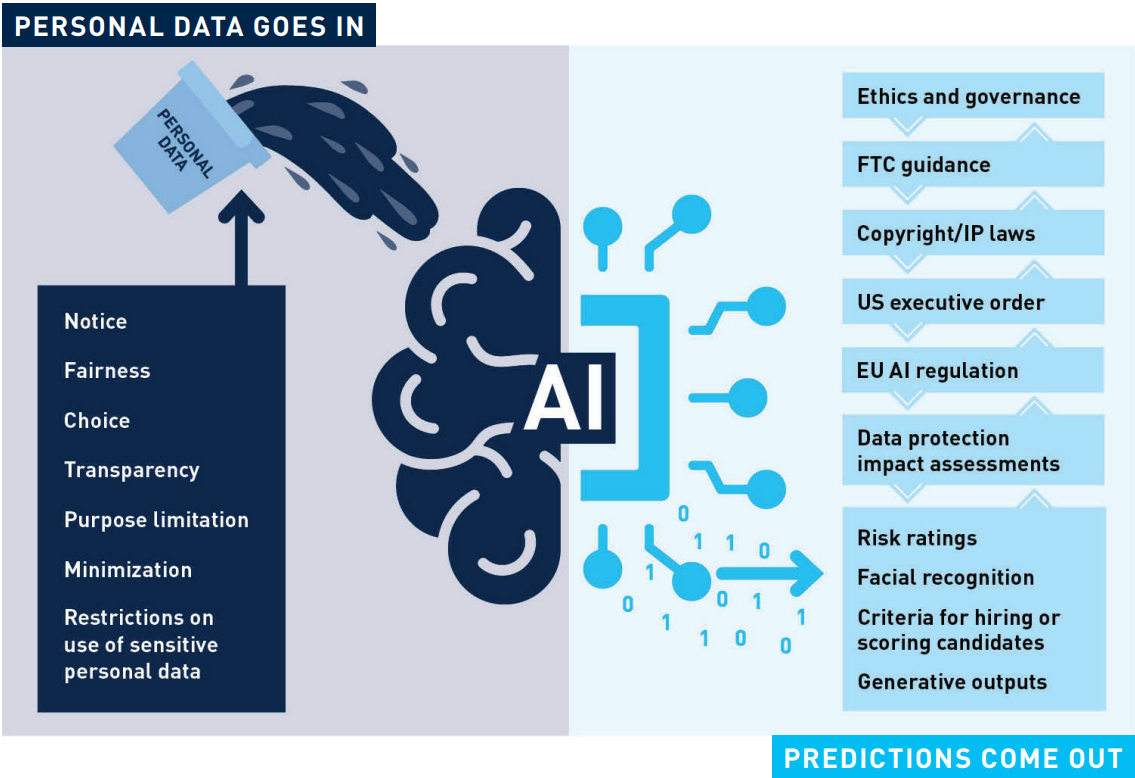

Artificial intelligence (AI) technologies rely on vast amounts of data and compute power for model training to enable them to make predictions or decisions without being explicitly programmed for the task. AI may make us more prosperous and innovative, but it comes with privacy and data protection risks. Organizations that design or deploy AI tools need to investigate how their AI systems collect, process, and disseminate personal data across digital and brick-and-mortar marketplaces.

How Is Personal Data Collected?

It has never been easier to collect and aggregate information about people as they work, peruse aisles in a grocery store, browse the internet, listen to music at home, and even move through the physical world, where their geolocation data tracks them. AI enables organizations to collect, manipulate, and ultimately exchange vast amounts of personal data without the individual’s prior knowledge or consent.

For example, someone may choose a song on a streaming service that matches their mood. Let’s say one of us chose “Coffee Acoustic” as a curated playlist for writing this article. The streaming service shares the “java and chill” impression with online behavioral advertising markets, sometimes without granular, express consent. The ad purchaser then serves ads based on our Joni Mitchell morning, and predictions are made about our likely future choices based on this music preference. When we later visit a brick-and-mortar store that was advertised on the streaming service, the store’s background music may carry a subaudible ping that our phone’s microphone “hears” and reports back to the advertiser. What we were listening to in the office has now followed us, through our phones, to a physical place. We may then receive an email or text offering a discount based on the predictions made from processing all of this personal data.

How Is Personal Data Processed?

Once personal data is collected as part of an AI’s training corpus or in the queries put to the AI, it is likely “processed” under laws like the European Union’s General Data Protection Regulation (GDPR). The personal data used to train machine-learning models may introduce bias into the model. Bias is tightly associated with the notions of transparency and fairness in the GDPR and is governed by employment laws like Title VII of the Civil Rights Act of 1964 and the US Equal Employment Opportunity Commission’s guidance on AI and Title VII. Using personal data in a manner that results in biased predictions or outputs may violate privacy and employment laws. Also, the US Federal Trade Commission (FTC) has cautioned companies that AI that is unfair or deceptive will be subject to its Section 5 authority under the FTC Act. Companies that deploy AI must be transparent and clear when offering AI to consumers and must avoid conduct that could be considered deceptive.

Additionally, organizations need to be cautious with respect to the perceived tradeoff between privacy and AI utility (e.g., not providing end-to-end encryption, thus allowing the AI system to read users’ chat messages), because compliance sometimes unfortunately requires friction to protect individual rights. Moreover, trouble may ensue if personal data that was collected for one purpose is used for another (e.g., to train an AI model) without the individual’s consent to the secondary purpose. We recommend companies put together cross-functional program teams to develop policies and procedures to manage these complex and interrelated challenges.
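To make the secondary-use concern concrete, here is a minimal Python sketch of a deny-by-default gate that admits a record into an AI training set only when the individual consented to that specific purpose. The names `ConsentRecord`, `may_use_for`, `filter_training_rows`, and the purpose label `"model_training"` are hypothetical and chosen for illustration. The sketch models consent only; it does not address other lawful bases for processing or any particular statute’s requirements.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of the purposes one individual has consented to."""
    subject_id: str
    consented_purposes: set[str] = field(default_factory=set)


def may_use_for(record: ConsentRecord, purpose: str) -> bool:
    """Return True only if the individual consented to this specific purpose."""
    return purpose in record.consented_purposes


def filter_training_rows(rows, consents, purpose="model_training"):
    """Keep only rows whose data subject consented to the given purpose.

    Rows with no consent record at all are excluded (deny by default).
    """
    allowed = []
    for row in rows:
        record = consents.get(row["subject_id"])
        if record is not None and may_use_for(record, purpose):
            allowed.append(row)
    return allowed


# Illustrative data: only subject "a" agreed to model training.
consents = {
    "a": ConsentRecord("a", {"playlist_recommendations", "model_training"}),
    "b": ConsentRecord("b", {"playlist_recommendations"}),
}
rows = [
    {"subject_id": "a", "playlist": "Coffee Acoustic"},
    {"subject_id": "b", "playlist": "Coffee Acoustic"},
    {"subject_id": "c", "playlist": "Coffee Acoustic"},  # no consent record
]
training_rows = filter_training_rows(rows, consents)
```

In this sketch, data collected for one purpose (playlist recommendations) is excluded from training unless the second purpose was separately agreed to, and a missing consent record is treated the same as a refusal.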

How Is Personal Data Disseminated?

Organizations must be cautious about revealing or sharing the personal data that individuals have entrusted to them with third parties, including AI providers. For example, an organization may want to use an AI provider’s tools to better understand and identify patterns in its vast amounts of data. Before sharing the information, the organization needs to assess the applicable privacy and data protection requirements: notice, transparency, fairness, secondary use, and (in some cases) consent.

What Now?

Organizations must safeguard personal data in accordance with US state and federal privacy laws and, where applicable, extraterritorial laws like the GDPR. Government contractors making use of AI should consider the privacy protections in President Biden’s executive order on AI. We recommend that organizations “think twice” about how best to maximize the value of AI in a holistic way that addresses other compliance concerns as well. Building a multidisciplinary AI ethics and governance team that includes privacy, security, legal, and other stakeholders is a good place to start a larger AI compliance program. Companies may also want to consider engaging outside experts to help them navigate this complex and evolving space.